How can you make sure you get the maximum ROI from SEO this year? How can you safely get your website onto the first page of Google in 2022? What SEO strategies should you use?

I’ve done in-depth research and gathered proven tips from well-known SEO experts such as Rand Fishkin from Moz, Brian Dean from Backlinko, and Neil Patel.

They highly recommend that you follow these tips if you’d like to boost your rankings this year.

Let’s consider them one by one.

Crawlable Content and URL

From the SEO point of view, all the content that you have on the page, including the text, images, visuals, videos, etc., should be crawlable. What does that mean?

According to Rand Fishkin, that’s probably the #1 most important factor to consider. If you miss this, you face the risk of not being noticed by Google at all.

Watch this short video with Matt Cutts, where he explains the biggest 3 mistakes that webmasters make. Needless to say, one of them is not having your website crawlable!

Along with producing catchy and interesting content for visitors, a webmaster needs to consider technical issues to make a site visible to search engines.

How to Make a Website Crawlable

What should you do to make your resource, page, or URL crawlable?

Let me disclose here some sure-fire ways to make your site more visible for search algorithms.

When you want to make a website crawlable, it is vital to keep in mind that Google has developed special algorithms and demands concerning the link structure, how the URL should look, and the way the sitemap is structured.

Here is the checklist for making your website crawlable with the help of Webmaster tools:

- Create the robots.txt file properly

- Create and regularly update the sitemap

- Manage URLs properly

- Manage true redirects

- Add structured data (Schema.org markups, Open Graph, JSON-LD)

- Add meta tags (title, description, images, index/noindex, follow/nofollow, etc.)

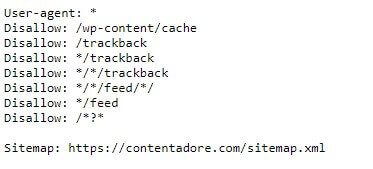

The robots.txt is an important file that contains rules for search engine bots (like Google, Bing or Yahoo) or services (such as Ahrefs, Semrush, or Serpstat). All the bots look for this file first, and then, based on the rules therein, they plan how to crawl the site.

The webmaster can use path rules with simple wildcard patterns to deny access to some URLs and can specify the path to the sitemap. These rules help bots save time and resources by not crawling unnecessary pages. This saves crawl budget and improves SEO. For more detailed information, feel free to check this website.
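To see how a bot might read these rules, here is a minimal sketch using Python's standard `urllib.robotparser`. The robots.txt content, the `example.com` URLs, and the `ExampleBot` name are made up for illustration, and the standard-library parser matches paths by plain prefix, so the rules below avoid wildcards.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for an example site (not from a real site).
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /search
Allow: /

Sitemap: https://example.com/sitemap.xml
"""


def load_rules(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a rule set that can answer 'may I crawl this?'."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


rules = load_rules(ROBOTS_TXT)

# "ExampleBot" is an illustrative user agent; the "*" group applies to it.
blog_allowed = rules.can_fetch("ExampleBot", "https://example.com/blog/post")
admin_allowed = rules.can_fetch("ExampleBot", "https://example.com/admin/login")

# site_maps() (Python 3.8+) lists the Sitemap entries found in the file.
sitemaps = rules.site_maps()
```

Running a check like this against your own file before publishing it is a cheap way to confirm that you are not blocking pages you want indexed.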

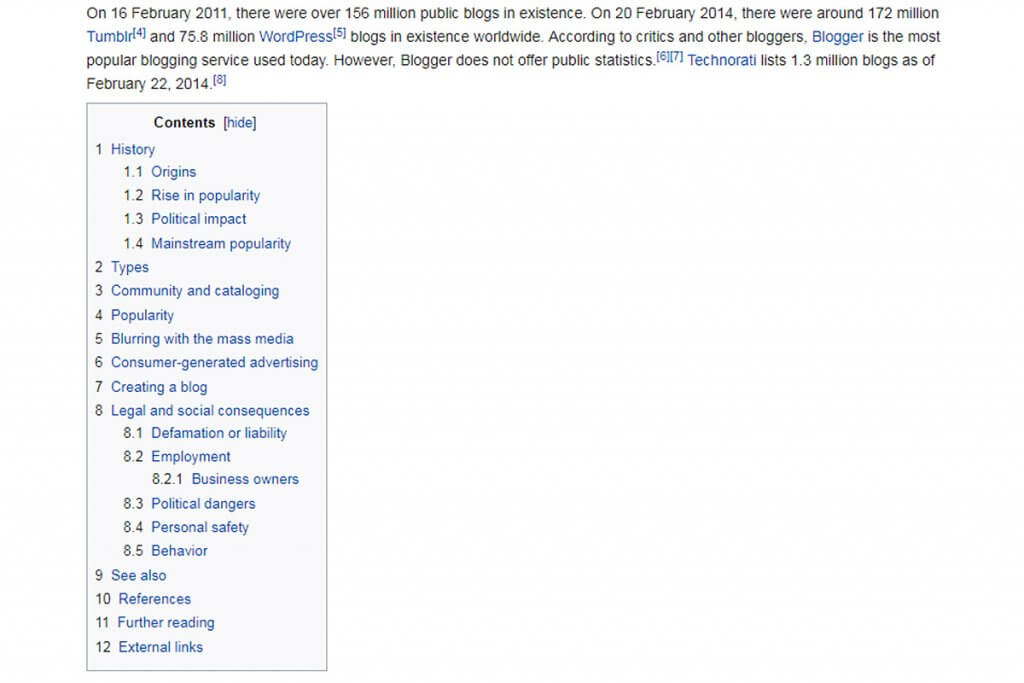

The sitemap is a file that contains your site’s links so that the search robots can index them properly.

Of course, you can leave your website without a sitemap.

Technically, a search robot will be able to categorize the links and pages within your resource even without a sitemap.

However, it will take more time. And in this case, there’s no guarantee that all the data will be assessed properly by the crawler.
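As a rough illustration of what a sitemap file contains, the sketch below builds a small XML sitemap with Python's standard library. The page URLs and dates are placeholders, and `build_sitemap` is a hypothetical helper name; most CMS plugins generate this file for you.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, lastmod) pairs, with lastmod as YYYY-MM-DD."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder pages for an example site.
sitemap_xml = build_sitemap([
    ("https://example.com/", "2022-01-01"),
    ("https://example.com/blog", "2022-01-15"),
])
```

The resulting text can be saved as sitemap.xml and referenced from robots.txt or submitted through the search engine's webmaster tools.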

If you deal with apps, make sure your app is indexed by Google.

This is vital if you want to get more mobile traffic.

To increase mobile traffic, you should also serve, and clearly indicate, two URL variants for your pages: one for desktop devices and one optimized for mobile devices.

Making Crawlable URLs

Making crawlable URLs is a big part of making a crawlable website.

First, let’s make shorter URLs. Search engines do not like very long links. For them, long URLs indicate that a page could have poor quality and might lead to spamming and endless redirections.

If your site is adapted for mobile devices (it definitely should be, by now) and you have Accelerated Mobile Pages (AMP), make sure you provide the alternative URLs for the robots.

By the way, although AMP pages are not a direct Google ranking factor, they may help you rank better in the Google News Carousel (its mobile version).

If you want to get your content indexed as fast as possible, make sure you add new links to the content in your other pages (in the articles themselves, or in special blocks of the sidebar, footer, etc.). This will allow you to get the specific content indexed first.

You should also make sure your URLs are formatted properly. There are several main rules you need to adhere to:

- The content of the URL should be related to the content of the page it leads to (for example, if there is a URL for the main page, it should look like website.com/main, rather than website.com/1). It’s also beneficial to use keywords in your URLs. It makes them more readable by human beings and improves the clickability of the snippet. However, keyword stuffing does more harm than good. It can spoil all your efforts, and your site will look like spam.

- The structure of the URL should be as simple as possible. The fewer slashes (“/”) there are in the address, the higher the priority of the page will be. Fewer folders will do a better job. Exclude dynamic parameters if it’s possible.

- Avoid unnecessary subdomains. The closer a page sits to the root of the main domain, the higher its priority will be.
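The rules above can be sketched as a small helper that turns a page title into a short, readable URL slug. This is only an illustration: `slugify` and its `max_words` limit are assumptions of mine, not a Google requirement.

```python
import re
import unicodedata


def slugify(title: str, max_words: int = 6) -> str:
    """Turn a page title into a short, lowercase, hyphen-separated URL slug."""
    # Drop accents so the slug stays plain ASCII.
    ascii_text = (
        unicodedata.normalize("NFKD", title)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Keep only runs of letters and digits; punctuation and spaces are dropped.
    words = re.findall(r"[a-z0-9]+", ascii_text.lower())
    # Cap the number of words to keep the URL short (the limit is arbitrary).
    return "-".join(words[:max_words])


slug = slugify("How to Make a Website Crawlable")
```

A page published under website.com/ plus such a slug describes its content far better than website.com/1 does.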

Sometimes, you need to move a page to another URL or move a website to another domain or subdomain. For these purposes, you can use three of the most common redirects:

- 301, “Moved Permanently” (this is recommended for SEO)

- 302, “Found” or “Moved Temporarily”

- Meta Refresh

You should ensure that URL redirects are set up correctly.

For example:

- If the page was moved, you can inform bots that the URL for this page has changed. Google will then reindex the URL for this page soon.

- If users mistakenly type your URL with double slashes, hyphens, dots, and/or underscores, you can redirect them to the correct URL and avoid the 404 page or double redirection hops (this also applies to slash vs. non-slash at the end of a URL).

- If the website has www as well as non-www domains, or HTTP and HTTPS protocols, you can inform bots which path is the main one to avoid having duplicate pages in the Google index.

- If the website CMS generates dynamic parameters in URLs, you can set up the .htaccess file for redirection to more readable URLs.
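To make the redirect cases above more concrete, here is a minimal sketch that computes the single "main" URL for a request and tells you whether a 301 is needed. The policy it encodes (HTTPS, no www, no trailing slash, no doubled slashes) is an assumption chosen for the example; pick whichever variant you prefer, as long as you are consistent.

```python
import re
from urllib.parse import urlsplit, urlunsplit


def canonical_url(url: str) -> str:
    """Return the preferred form of a URL under the assumed policy."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]                      # prefer the non-www host
    path = re.sub(r"/{2,}", "/", parts.path)  # collapse doubled slashes
    if len(path) > 1:
        path = path.rstrip("/")              # drop the trailing slash
    if not path:
        path = "/"
    return urlunsplit(("https", host, path, parts.query, ""))


def redirect_for(url: str):
    """Return (301, target) if the URL should redirect, or None if it is canonical."""
    target = canonical_url(url)
    return None if target == url else (301, target)


result = redirect_for("http://www.Example.com//blog//post/")
```

Because the function maps every variant straight to the final URL, a visitor never goes through a chain of several redirect hops.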

The following are some great tools for analyzing redirects:

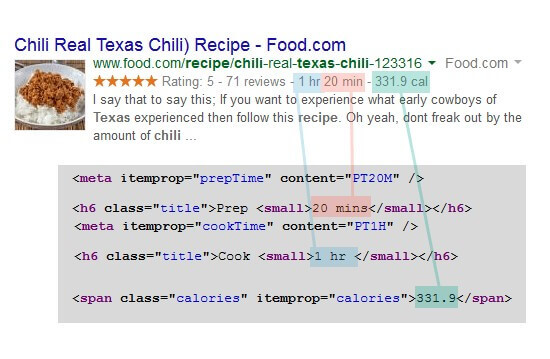

Structured Data and Rich Snippets

This tip is not for everybody. However, every time you have an opportunity for a featured (rich) snippet, you should capitalize on it. It may be a good idea to use rich snippets in the travel niche, or if you get a chance for exposure in Google News.

One of the ways to increase the number of clicks when your page link is displayed in the SERP is to offer as much relevant information to the user as possible.

Everybody knows that search engines are constantly implementing new tools and approaches to make search results more relevant and user-friendly. In doing so, they also give website owners more options to promote their resources.

Using structured data for your resource is one of the effective ways to get more impressions in the SERP, more clicks, and more visitors.

The classic structured data definition looks like this:

Your list of data must be categorized in a specific way. Structuring data lets the search engine categorize your page content more correctly.

This, in turn, allows you to display the most relevant data to users in the SERP.

If you manage to structure your data properly, it will appear as a rich snippet in the SERP.

How to Make Structured Data

Setting up structured data for SEO is rather easy. Add the markup to the page itself (for example, as a JSON-LD block in the HTML) so that search robots can read it. In the markup, point out the data that needs to be categorized.

As it’s rather challenging to define the complex standards that are used by algorithms, you can use tools to assess your efforts on optimization and categorization of content.

Such tools could include a structured data report, a testing tool, and a data highlighter.

Using these metrics, you can get full information on whether the operation was performed as you intended and whether the crawler will assess it properly.

Structured Data Types

In the SEO context, a structured data type is defined by the main characteristic used to categorize information on the page.

The markings have a wide range of applications; they can be used for different types of content (video, text), on product pages, in reviews, etc.

If you want to make a file with structured data for your page, you can choose from among three formats:

- JSON-LD – a JSON-based format, usually embedded in the page inside a script tag

- Microdata – HTML attributes used to annotate existing page content

- RDFa – an HTML5 extension that also annotates content through attributes
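As an illustration of the JSON-LD option, the sketch below builds a Schema.org Article description and wraps it in the script tag that goes into the page. The helper name `article_json_ld`, the author, and the URL are placeholders, and the field list is a small subset that I picked for the example.

```python
import json


def article_json_ld(headline: str, author: str, date_published: str, url: str) -> str:
    """Return a <script> block with JSON-LD describing an article."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    payload = json.dumps(data, indent=2)
    # Escape "</" so a value can never close the script tag early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder values for an example article.
snippet = article_json_ld(
    "How to Make a Website Crawlable",
    "Jane Doe",
    "2022-01-15",
    "https://example.com/crawlable",
)
```

After adding a block like this to a page, run the page through a structured data testing tool to confirm that the markup is read the way you intended.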

Structured Data vs Unstructured Data

Structuring info on the page surely offers more advantages and opportunities for your visitors – especially if you manage to execute it properly and get a snippet in the SERP.

Structured data markup is not a direct ranking factor. However, it can bring you some SEO benefits, such as helping your page get indexed more easily.

Here are the advantages of making a categorized section for the information used on the page:

- it makes your resource more crawlable for search engines

- it provides more valuable and relevant information for users

- it can increase the number of relevant views and the reach of your site

How to Display Snippets

Snippets can help you get more clicks to your resource when its link appears on the search results page. Snippets allow users to establish whether the page content is relevant enough to what they want, compared with the other options their search returned.

If you run a blog, consider that creating rich snippets for blog posts seriously increases your chances of getting more page views.

As an example, here are three HTML attributes (part of the Microdata format) you can use to mark up content for a snippet:

- itemscope – declares that the element and its contents describe a single item

- itemtype – names the type of the described item, usually a Schema.org URL

- itemprop – labels a specific piece of data about the item (URL, price, etc.)
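To show how these attributes fit together, here is a small sketch that marks up a product with Microdata and then reads the values back the way a very simple crawler might. The product, its price, and the `MicrodataReader` class are invented for the example, and the markup is deliberately flattened (real Schema.org product markup usually nests the price inside an offer).

```python
from html.parser import HTMLParser

# Simplified, hypothetical product markup using the three Microdata attributes.
PRODUCT_HTML = """
<div itemscope itemtype="https://schema.org/Product">
  <span itemprop="name">Trail Running Shoes</span>
  <span itemprop="price">79.99</span>
</div>
"""


class MicrodataReader(HTMLParser):
    """Collect the item type and the text of each itemprop element."""

    def __init__(self):
        super().__init__()
        self.item_type = None
        self.properties = {}
        self._current_prop = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if "itemscope" in attrs:          # this element describes an item
            self.item_type = attrs.get("itemtype")
        if "itemprop" in attrs:           # the next text belongs to this property
            self._current_prop = attrs["itemprop"]

    def handle_data(self, data):
        if self._current_prop and data.strip():
            self.properties[self._current_prop] = data.strip()
            self._current_prop = None


reader = MicrodataReader()
reader.feed(PRODUCT_HTML)
```

After feeding the markup, `reader.item_type` holds the Schema.org type and `reader.properties` holds the labelled values, which is roughly the information a search engine needs to build a rich snippet.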

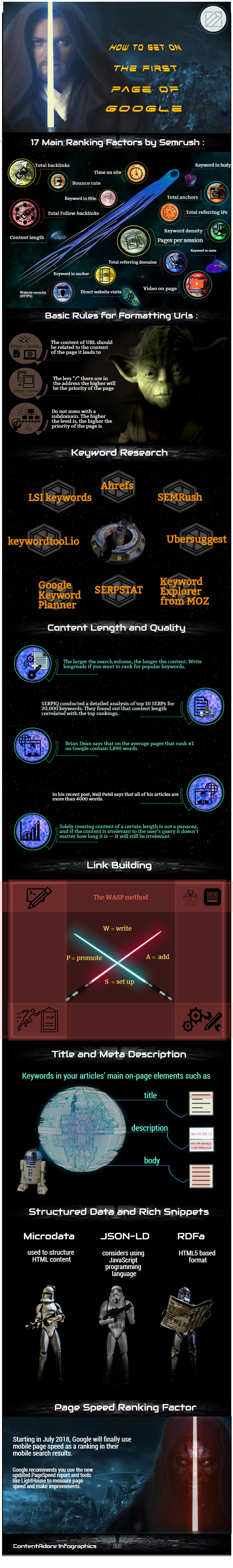

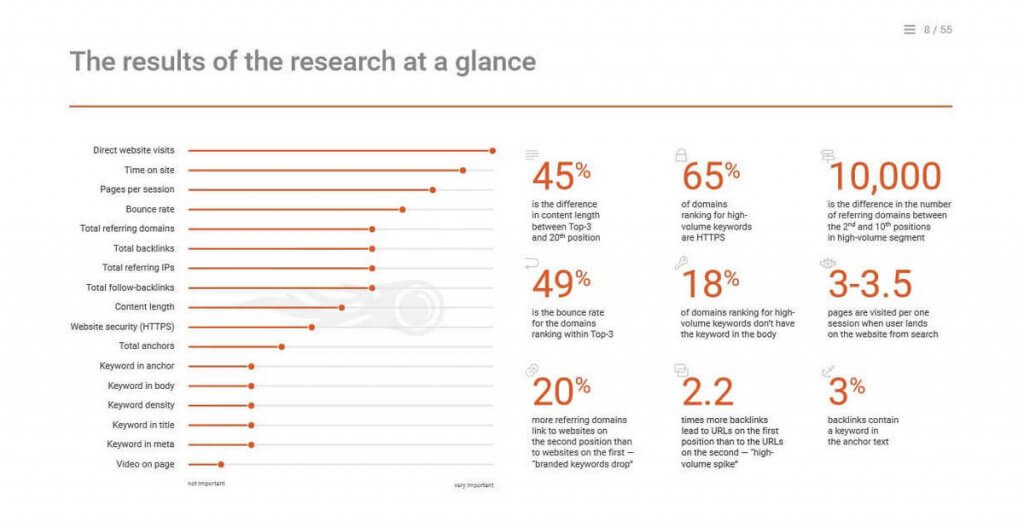

Page Speed and SEO: Speed Is Important

Nobody likes to spend time waiting. The same is true when it comes to browsing the web. If you want to rank highly, optimize your website in terms of loading speed and user experience – otherwise you risk falling behind your competitors.

Bing and Google treat page loading speed as a ranking factor. Crawlers estimate the average page load time for your site based on your page’s HTML code.

Why Improve Website Performance?

When we are talking about website performance, one of the most important metrics we need to consider is user behaviour.

It’s all about how satisfied the user is with the resource he or she has visited.

Page speed optimization is vital to allow the users to reach the information they need as quickly as possible.

Years ago, when the Internet was available through dial-up, we were ready to wait minutes for content to be loaded.

However, today, when we have access to a broadband connection, we get irritated if loading takes any longer than a few seconds. Thus, a page with poor speed optimization could be a reason for users to leave your site. That signals to Google that visitors are not happy with what they experienced at your site. As a result, your bounce rate increases and you move further away from making your website appear on the first page of Google.

The same thing occurs if the content (like video clip or music) is not launched instantly after being started.

Thus, any webmaster needs to increase page load speed to comply with these requirements. Google understood this years ago when they announced that page load speed would influence rankings.

Overview of Page Speed Ranking Factors

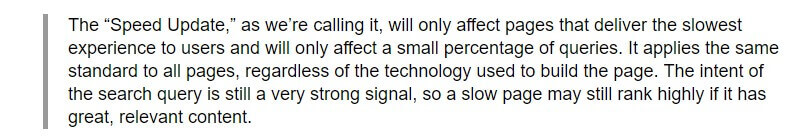

Google’s Doantam Phan and Zhiheng Wang revealed the following:

Actually, there is no clear understanding as to what exactly was meant by the “page loading time”. Studies have been unable to show any direct correlation between website load times and SERP position, likely due to the numerous other factors that affect your rankings.

Here is an overview of three technical metrics that can be used by a web page speed grader:

- Time to the first byte (TTFB) – the overall period of time it takes for the first byte of website data to be received by the user

- Document complete load time – the overall time it takes to load enough data for the page to be operational

- Fully rendered load time – the time needed for all data to be loaded by the user

It has been determined that TTFB has the greatest influence on the page ranking. Metrics like document complete load time and fully rendered load time don’t seem to play as significant a role for the user. The optimal time to the first byte should be 200 ms.

This may seem strange, as one would logically assume that from the perspective of the user, document complete load time, at least, would be the main factor; even the fastest TTFB doesn’t guarantee that you will have the impression that a website has loaded quickly. Users need to get access to the content to make such a determination.
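If you want a rough feel for the difference between TTFB and full load time, the sketch below measures both for a single request with Python's standard `http.client`. `measure_ttfb` is a hypothetical helper; it times from the start of the connection, so DNS and connection setup are included, and it fetches only the HTML document, not the images, scripts, and styles a browser would also load.

```python
import http.client
import time
from urllib.parse import urlsplit


def measure_ttfb(url: str, timeout: float = 10.0):
    """Return (ttfb_seconds, total_seconds, body_bytes) for one GET request."""
    parts = urlsplit(url)
    if parts.scheme == "https":
        conn = http.client.HTTPSConnection(parts.netloc, timeout=timeout)
    else:
        conn = http.client.HTTPConnection(parts.netloc, timeout=timeout)
    path = parts.path or "/"
    if parts.query:
        path += "?" + parts.query

    start = time.perf_counter()
    conn.request("GET", path)          # connects, then sends the request
    response = conn.getresponse()      # returns once the status line and headers arrive
    ttfb = time.perf_counter() - start

    body = response.read()             # download the rest of the document
    total = time.perf_counter() - start
    conn.close()
    return ttfb, total, len(body)
```

For example, `measure_ttfb("https://example.com/")` returns the two timings in seconds. A single measurement is noisy, so repeat it several times and from different locations before drawing conclusions.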

Page Speed and SEO

You need to decide how important it is to speed up the web page.

It could be argued that it is critically important based on Google’s announcement that pages that perform poorly for mobile devices will be penalized and downgraded in the SERP.

However, there is still no clear coefficient that can be used to calculate the influence of page speed on Google rankings.

Your goal should be to optimize page speed for the website such that you can guarantee your resource won’t be downgraded as a result of slow page speed.

Tools for Website Performance Analysis

Here are some good tools that you can use to get a clear picture of your site performance:

How to Increase Page Load Speed

Every component present on your page requires a separate HTTP request to be received before it can be downloaded. And since every request needs time to be processed, the more requests there are, the more time it will take a user to load your page. Thus, the first thing to focus on to speed up your website is minimizing the number of different components in it.

In order to achieve that goal, you should minify and combine the files on your page. Minifying simply means eliminating all the unnecessary whitespace, formatting, and code from your files. Combining your files is just what it sounds like: for example, you could join different JavaScript or CSS files into one.
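To illustrate the idea, here is a deliberately naive sketch that minifies a few CSS snippets and combines them into one string. `minify_css` and `combine_css` are hypothetical helpers; real minifiers handle many edge cases (strings, special selectors, and so on) that this example ignores, so use a dedicated tool or plugin in production.

```python
import re


def minify_css(css: str) -> str:
    """Naively strip comments and unnecessary whitespace from CSS."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)  # remove comments
    css = re.sub(r"\s+", " ", css)                        # collapse whitespace
    css = re.sub(r"\s*([{};:,])\s*", r"\1", css)          # trim around punctuation
    return css.strip()


def combine_css(sources) -> str:
    """Minify several stylesheets and join them so the page needs one request."""
    return "".join(minify_css(source) for source in sources)


minified = minify_css("body {\n  color: red; /* brand color */\n}\n")
combined = combine_css(["a { color: blue; }", "p { margin: 0; }"])
```

Two stylesheets become one shorter file, which means one HTTP request instead of two and fewer bytes to transfer.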

If you have a WordPress website, the simplest and quickest way to achieve this is using the “WP Rocket” plugin or a similar one. You’ll be able to minify and combine your content by just checking a few boxes.

After that, make sure to optimize your page images, keeping the total amount of bytes as low as possible. Again, if you use WordPress, you can use helpful plugins such as WP Smush to do the job for you right when you upload the images.

Finally, to improve your page speed, be sure to enable Gzip compression on your site. It is one of the most widely used compression methods, and it can often cut the size of text-based responses such as HTML, CSS, and JavaScript by more than half, which shortens download time.
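You can get a feel for what Gzip does to text content with a few lines of Python. The HTML below is a made-up, highly repetitive sample, so the savings it shows are better than what a typical page will see; `gzip_savings` is a hypothetical helper name.

```python
import gzip


def gzip_savings(payload: bytes) -> float:
    """Return the fraction of bytes saved by gzip-compressing the payload."""
    compressed = gzip.compress(payload)
    return 1 - len(compressed) / len(payload)


# Artificial sample: the same list item repeated 200 times.
html = ("<li class='item'>Example list entry</li>\n" * 200).encode("utf-8")
savings = gzip_savings(html)
```

On a real site you do not compress files by hand like this; you switch compression on in the web server or CDN settings, and the browser decompresses responses automatically.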

Now that you’ve optimized the elements on your page, it’s time to leverage the external components for a good page load time, too.

First of all, consider investing in a private server. Shared hosting can get you up and running. It’s very cheap, so it can suit your needs well in the beginning. But as soon as your site performance starts to slow down because your hosting can’t sustain the traffic, you should acquire a private server – there’s no way around it.

Sometimes, though, even a fast server is not enough, if its location is far away from the users. For that reason, Content Delivery Networks (CDNs) were created.

CDNs are a collection of servers distributed across different locations in order to let your users access your site faster wherever they are. Of course, these are paid services, but if your site is growing, a CDN is quite a reasonable investment.

Website Performance Optimization Insights

Over the past few years, Google has been constantly implementing changes that make the Internet more mobile-friendly, and this needs to be considered when making efforts to improve website performance.

A mobile-responsive design has become a must, and you can’t afford to be left behind. You can use mobile plugins on WordPress or create a mobile version of your site using such services as bMobilized or Duda Mobile. But bear in mind that these should be just temporary solutions.

Ideally, you should have a responsive design with an overall mobile-first approach. Basically, you should think this way: “If I don’t need it on mobile, should I put it on the desktop version?”

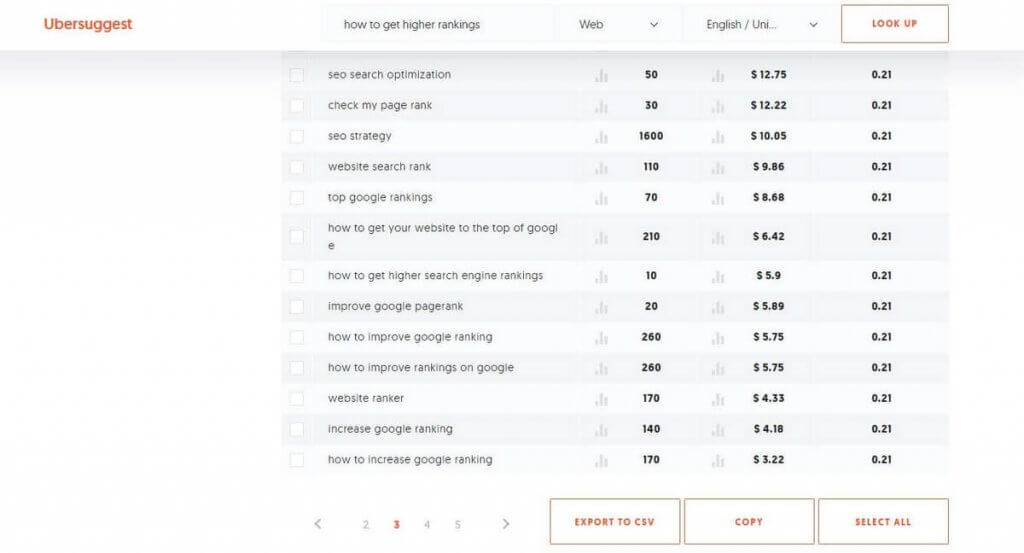

Keyword Research

You should do some thorough keyword research to get keywords and phrases that are best suited to the content/product/service that you offer your site visitors.

Remember that once users enter a certain keyword, they want to see a relevant solution or answer to their query.

If you build your content around that intent, this strategy will have the strongest effect.

How to Perform Keyword Research

Using the most popular keywords can be challenging if you want to get the first position in Google because of the stiff competition. What should you do to get the most out of keyword research?

Here is a brief keyword research checklist suggested by Tim Soulo from Ahrefs:

- Test your article ideas for “search demand”

- Determine the full traffic potential of a keyword

- Find the best keyword to target

- Determine your chances of getting the first spot in Google with this keyword

While building your keyword list, pay attention to the following metrics:

- Search volume. Look for one keyword with a high search volume and the least possible competition. Use that as your main keyword. This means that you need to put it in your Title, H1, and in the first paragraph of the text (ideally, you should use it somewhere within the first 100 words of your article). Choose a couple of keywords with medium search volumes and low levels of competition. Use these somewhere in the middle of your text. As for the words with the lowest search volumes, use them at the end of the article. Avoid keyword stuffing. All the keywords should flow naturally through the article.

- CPC. This stands for cost per click. CPC will give you a clear picture of the prices for paid ad campaigns in Google. Here is a small tip. Identify keywords with the lowest levels of competition and the highest CPC, and use them for organic rankings. If they work well for paid advertisement, it means they can bring in a lot of traffic.

- Competition. This shows you how contested this or that keyword is. Try to find keywords with lower levels of competition and use them in your content.

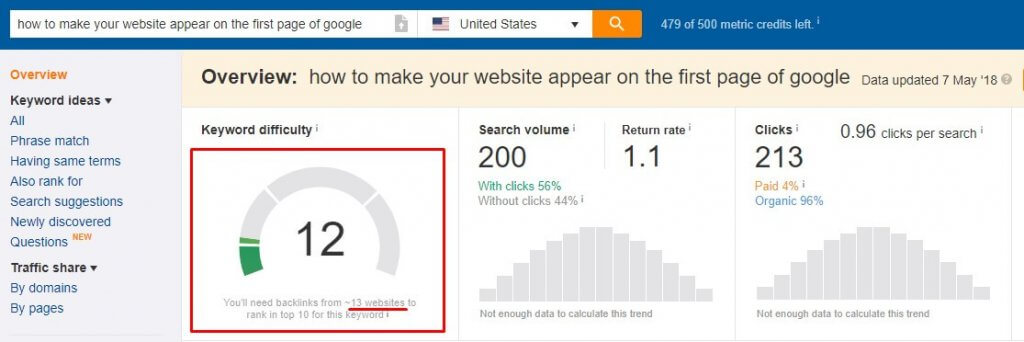

- Keyword Difficulty. This metric estimates how challenging it would be to make your website appear on the first page of Google using the chosen keyword. The Ahrefs Keyword Explorer tool shows you the approximate number of backlinks that you would need to reach the Top 10 in Google.
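The placement advice for the main keyword (Title, H1, and the first 100 words) is easy to check automatically. Here is a minimal sketch; `keyword_placement` is a hypothetical helper, the sample texts are invented, and it uses a plain case-insensitive substring match, so it will not catch word variations.

```python
import re


def keyword_placement(keyword: str, title: str, h1: str, body: str,
                      first_n_words: int = 100) -> dict:
    """Report whether the main keyword appears in the title, H1, and opening words."""
    kw = keyword.lower()
    # Take the first N whitespace-separated words of the body text.
    opening = " ".join(re.findall(r"\S+", body)[:first_n_words]).lower()
    return {
        "title": kw in title.lower(),
        "h1": kw in h1.lower(),
        "opening_words": kw in opening,
    }


report = keyword_placement(
    keyword="keyword research",
    title="Keyword Research: A Beginner's Checklist",
    h1="How to Perform Keyword Research",
    body="Most guides skip the basics. " * 30 + "Keyword research comes later.",
)
```

In this made-up example the keyword is present in the title and the H1 but only shows up after the first 100 words, so the opening paragraph would need a rewrite.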

Which Tools to Use

There are many keyword research tools available. Here is a short list of the most popular tools – the majority of SEO hackers use these daily:

- Ahrefs

- SEMRush

- Ubersuggest

- Keyword Explorer from MOZ

- LSI keywords

- SERPSTAT

- keywordtool.io

- Google Keyword Planner

It might be a good idea to use Google Trends for your keyword research, as well.

Look for things related to your niche and that are trending.

If you create good content on these topics using related keywords, you’ll have great odds of getting some extra traffic to your site.

The best way is to try each of them and discover what works best for you.

Competitor Research

The next step is to perform competitor research and find holes where you can fit. For this, just enter your keywords in Google and check what the SERP shows you.

Here, you should pay attention to the content that Google finds the most relevant to your search. Analyze it, and make sure you’ll be able to deliver even better content.

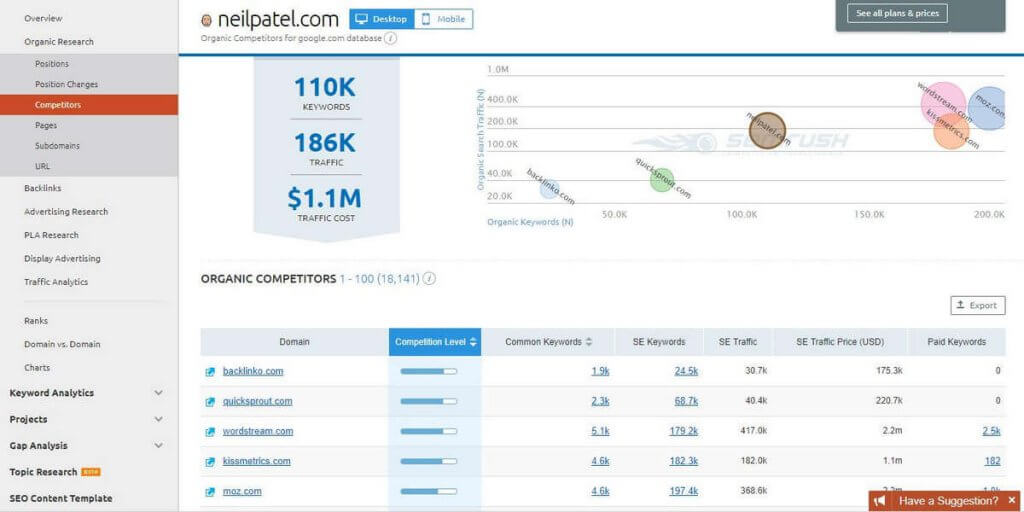

There is a powerful strategy that Neil Patel uses for his own projects.

First of all, go to SEMRush. Check the Domain Analytics section in your Dashboard, and choose Competitors there. Then input the URL of your main competitor there, and click the Search button.

Voila – you’ll be presented with the detailed data on traffic, number of keywords, traffic costs, and info on all other competitors.

Then, go to Ahrefs to see the detailed information about backlinks.

Find competitors that rank highly but have lower amounts of backlinks. Check all the sites that they get the backlinks from.

Create even better content than that of your competitors. Reach out to those sites and offer them your content.

Tools for Competitive Analysis

Here are some of the most popular competitor research tools that can help you focus your SEO efforts in the right direction.

- Ahrefs

- AdBeat

- BuzzSumo

- SEMRush

- SimilarWeb

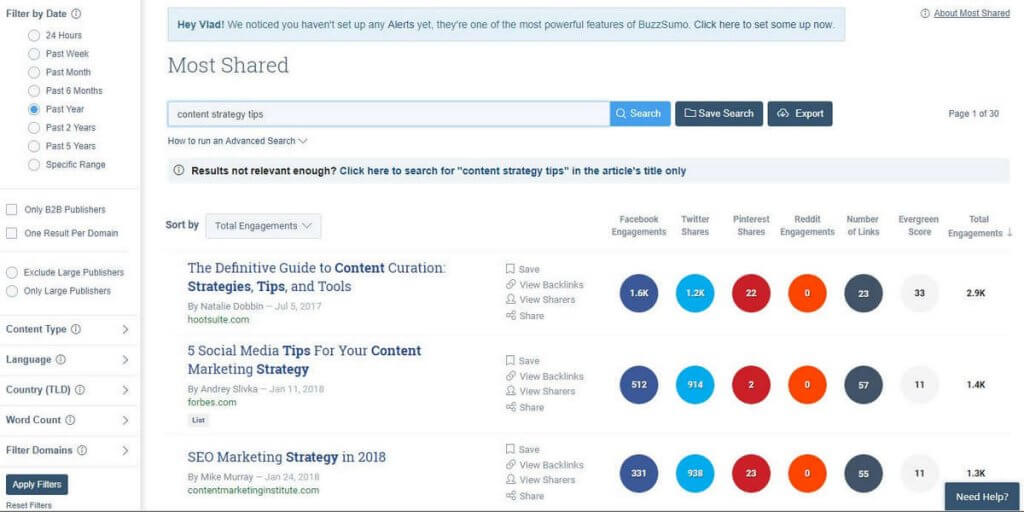

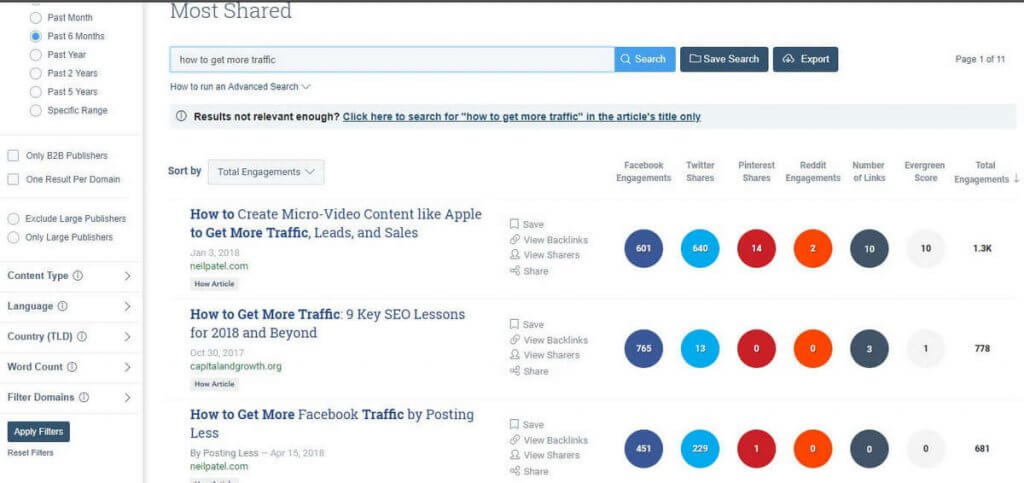

Let’s take a quick glance at Buzzsumo.

This is one of the best ways to remain on top of trends.

No matter what industry you’re in, just put in the keyword you want to explore and hit the Search button.

The tool will give you the most-shared articles that include that keyword (or a slight variation of it) in their titles.

In this way, you can build a strong content strategy.

It’s a great source of ideas for new topics and blog posts, too. Use this tool to see what’s already working.

Find the topics people love, and add your spin on them. It’s simple.

On top of that, you can check and see who shared that content on social media.

Thus, if you want to reach out to influencers to promote your similar content or set up a partnership deal, Buzzsumo is a great way to start.

It will show you the top influencers in your niche in a matter of minutes.

Finally, you’d probably like to know when an article on a certain topic or keyword comes out.

It’s crucial not only to monitor your SEO competitors but also to see what information you haven’t included in your post or article.

Set up Buzzsumo to send you alerts every time a competitor publishes a new blog post and/or when somebody publishes a post mentioning your keyword.

The ability to track and analyze the performance of both your competitors’ content and yours alongside each other is a very useful option.

All in all, the best thing you can do in terms of competitive analysis is to find a gap in the search queries and content available.

Find out what is missing at the moment that people would like to know about.

The next step is to create in-depth and top-quality content on your chosen topic.

Content Is Still the King

Neil Patel says that creating great and useful content is the best strategy to follow in our digital marketing era.

According to a study conducted by eMarketer.com, 60% of marketers tend to publish at least one piece of content each day.

What kind of content do you need to get on the first page of Google in 2022?

Of course, it should be unique and relevant. It should be a long read. It should be useful for your visitors.

Ideally, when the Internet user comes to your page, he or she should go no further.

This means that you need to provide your visitors with content that matches their queries the best way.

The information that they find on your site should cover all the important aspects of the topic. Only then will they like and bookmark your page.

The article should provide them with detailed and well-researched answers to the most important questions surrounding the topic.

To achieve this, your blog post should be a long read. It’s impossible to explain everything in detail in 500 or 700 words, right?

How many words should you aim for?

Let’s figure it out with the help of statistics and the top SEO influencers.

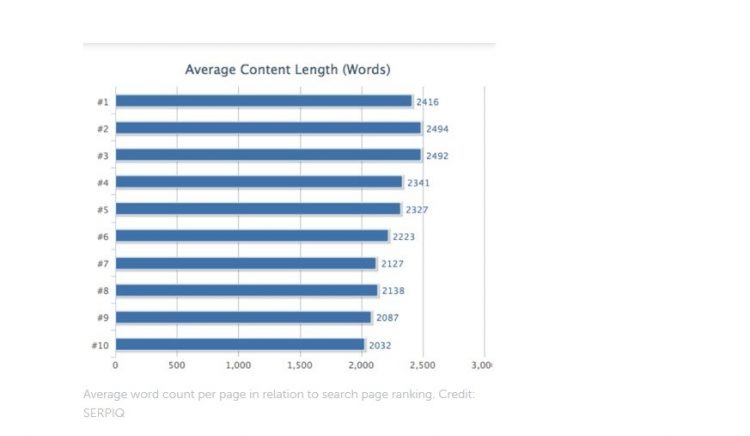

SERPIQ conducted a detailed analysis of the top SERPs for 20,000 keywords. They found out that content length was correlated with high rankings.

Brian Dean says that, on average, pages that rank #1 on Google contain 1,890 words.

In his recent post, Neil Patel says that all of his articles are more than 4,000 words.

However, you need to understand that long-form content doesn’t guarantee you top rankings. It’s just one of the factors that can help you improve your rankings.

Naturally, creating articles of some target length isn’t a magic pill. Your content should be relevant to the user’s query and provide the best answers for them. If it’s irrelevant, it will be useless for the user. In this case, it doesn’t matter how long it is; it won’t do any good whatsoever.

Top Benefits That You Can Get from Long-Form Content

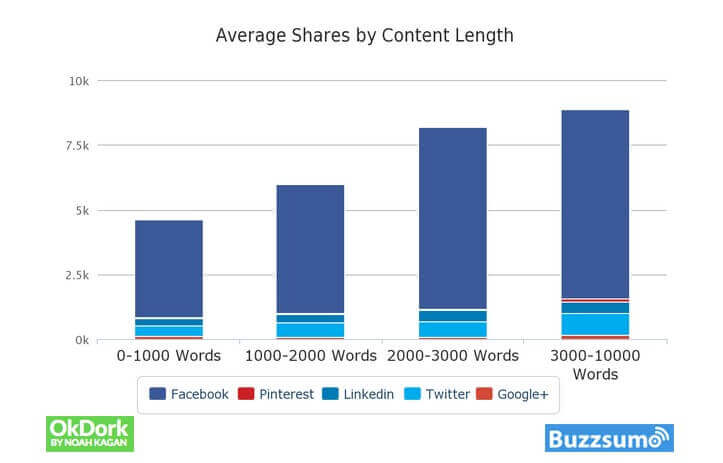

Long-form content is a powerful share trigger. More people are likely to share your blog post if it gives them in-depth info on the topic.

If your long-form content provides value to the readers and contains LSI keywords, it can bring traffic to your site for a long time. Creating high-quality long-form content is a very smart strategic move.

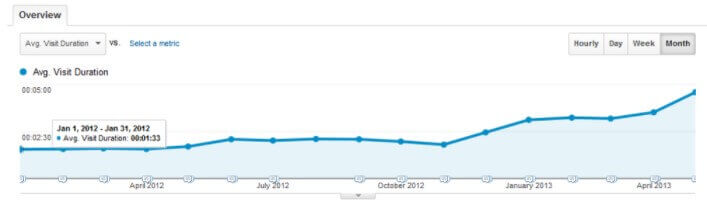

Long-form content increases user engagement and on-page time, which are very important ranking factors. Check out how WordStream managed to succeed in implementing this approach on their blog.

8 Reasons You Want to Have High-Quality Long-Form Content on Your Site. This content:

- Increases the time that readers spend on your site (you need to provide valuable and in-depth answers to the user’s query).

- Ranks well and gets more traffic from search engines.

- Promotes itself (your article will get more links naturally).

- Gets more shares (more people tend to share long reads on social networks).

- Establishes you as an authority in your niche.

- Is a good way of building and establishing your brand.

- Helps you build your own tribe (long-form content will build trust between you and your readers and make them want to subscribe to your newsletter).

- Increases conversion.

How to Make Your Website Appear on the First Page of Google: Level Up Your On-Page SEO

On-page SEO has evolved dramatically over the last few years. Google’s BERT update and the introduction of NLP have changed the game.

It’s no longer enough just to do your keyword research and insert long tail keywords here and there in your content.

The solution? You should turn to data-driven content writing and content marketing using tools like SurferSEO.

Have no time for creating high-quality content that gets high scores in SurferSEO, matches user intent, and helps your website rank high in Google?

Turn to our SEO copywriting services now and let us do the heavy lifting for you!

Link Building with Infographics

Backlinks have always been an important ranking factor. It’s impossible to rank well without backlinks. Even, if the site

What is the best link building strategy to boost your site positions in 2022? According to Neil Patel, the following approach should work very well.

- Find extremely popular articles. For this, check BuzzSumo and enter the keyword you have in mind. In a few seconds, it will show you the most frequently shared posts on social platforms.

- Choose the blog post with the largest number of likes.

- Create infographics based on the content posted in the article.

- Reach out to all the people who linked to it.

- Ask them to share the infographics as well.

What is the secret to Brian Dean’s success? It’s the so-called WASP method. It stands for

W = write

A = add

S = set up

P = promote

You should understand that it’s not sufficient just to write the best article and post it on your site.

Once you publish it, you should start promoting it as actively as possible.

Title and Meta Description

Meta tags are still going to be one of the essential things that you need to pay attention to when it comes to SEO.

Yes, meta tags can be a great way to attract your visitors’ attention and make them want to click and visit your site. As Kevin Gibbons discusses in this article, you need to test meta descriptions and title tags to improve your click-through rates.

Make sure your title and meta description are properly optimized and capable of grabbing users’ attention.

Keyword stuffing will bring you no good. However, it’s still effective to include keywords in your title, meta description, and body.
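A quick way to review these tags across many pages is to extract them and check that your keyword is present. The sketch below does that with Python's built-in HTML parser; `HeadReader`, `audit_meta`, and the sample page are all made up for the example, and the check is a simple case-insensitive substring match.

```python
from html.parser import HTMLParser


class HeadReader(HTMLParser):
    """Collect the <title> text and the meta description of a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit_meta(html: str, keyword: str) -> dict:
    """Return the title, the description, and whether each contains the keyword."""
    reader = HeadReader()
    reader.feed(html)
    kw = keyword.lower()
    return {
        "title": reader.title.strip(),
        "description": reader.description.strip(),
        "keyword_in_title": kw in reader.title.lower(),
        "keyword_in_description": kw in reader.description.lower(),
    }


# Hypothetical page head used only for this example.
SAMPLE_PAGE = """
<html><head>
<title>Page Speed Tips: Make Your Site Load Fast</title>
<meta name="description" content="Simple, step-by-step advice for a faster website." />
</head><body></body></html>
"""

audit = audit_meta(SAMPLE_PAGE, "page speed")
```

Here the keyword appears in the title but not in the description, which tells you which tag to rework first.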

How to Optimize Meta Title and Meta Description?

Search for your keyword on Google and check the Title and Description of the ads.

Businesses that run ads spend thousands of dollars every month to get the clicks you want to get for free.

For that reason, they perform extremely thorough optimization, testing different Titles and Descriptions to see what works best and ultimately using those combinations of words.

Why don’t you use those already tried-and-tested combinations? Compare the different ads and see what words are used more often, then implement them in your meta tags. It’s as simple as that. They are proven to work.

The second CTR trick is to use power words, such as today, right now, fast, step-by-step, quick, easy, simple, etc.

The reason this works so well is that we all, intuitively, are looking to get a positive outcome faster and with less effort.

Thus, words and phrases that bring to mind that kind of experience have more chances of grabbing our attention immediately.

Finally, make sure your content is actually relevant to your keyword, helpful, and easy to read.

If you attract people to click on your result but ultimately give them a poor experience with your content, they’re going to close your page immediately, giving Google bad signals.

This will drop your page down in the rankings and reduce your organic traffic.

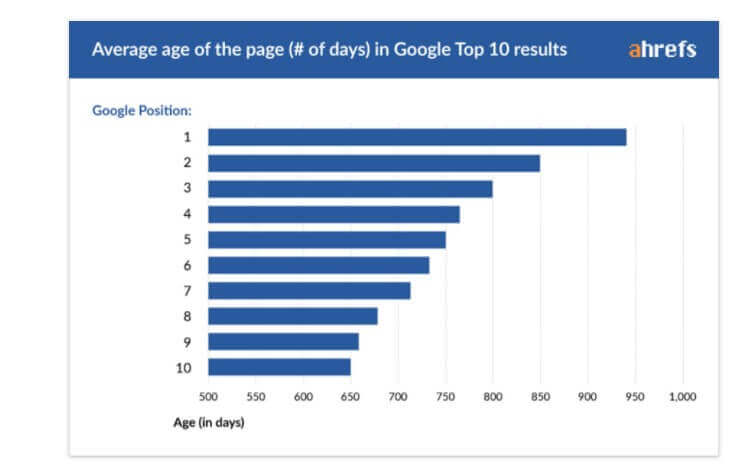

How Much Time Do You Need to Rank in Google?

Well, no one knows the 100% exact answer to this question.

That’s because Google and other search engines tend to use dozens and hundreds of factors in their ranking algorithms to identify the most suitable sites to match user queries in the best way.

Moreover, making your website appear on the first page of Google depends on budget, skill, website characteristics, competition, etc. When ranking, Google does tend to give the advantage to older sites.

According to a study conducted by Ahrefs, no more than 5.7% of recently created sites will reach the top of Google SERPs within one year.

Meanwhile, only 0.3% of fresh sites will get top rankings in one year or faster.

This means SEO is not roulette. It’s not about luck. It’s more about expertise, knowledge, and persistence.

Conclusion

Now you know lots of great tips on how to make your website appear on the first page of Google in 2022. It’s time to implement them. There is no magic pill or secret tactic that can help you trick users or Google. The best thing you can do is put effort into building a good website, creating awesome content for it, promoting it and earning backlinks to it, developing your product or service, keeping your clients happy, and growing your brand.

Do you have anything to add to this list?

Feel free to express your opinion in the comments below!

Thank you for article and valuable information. I have taken a lot for myself and will use it in SEO practice. I see there are many tools described in the article. Tell me please, which programme on your opinion is the best to realize website analysis, backlinks building, redirects, etc. Whether it is possible to stop on one software or there is necessity to use couple of it?

Thank you for your positive feedback, Judy. I’m really happy you like the article. I think there is no one best tool for this. It’s a good idea to combine several tools. As for website analysis and backlinks, I prefer Ahrefs and Serpstat. SEMrush can be a good tool to go with as well. Feel free to try several tools and decide which one works best for you.

Great staff. I like part of the article referring keywords. I have heard a lot referring to Artificial intelligence and its ability to substitute keywords and its significance in the nearest future. However for now there is just an opinion, which possibly will come into reality in the future. Which are the most important metrics need to pay attention, choosing the keywords?!

Thanks, Jose. The most important metrics when it comes to choosing the best keywords are: 1) competition (it should be as low as possible), 2) search volume, 3) keyword difficulty (try to find the keyword with the lowest keyword difficulty) 4) aim at LSI keywords

I hope this will be useful.

Incredible interview, well done!! I see that quie a lot is devoted to technical SEO, which is really important. I use Yoast plugin to generate robot.txt files, manage redirects, generate sitemap, etc. And what is your opinion? Which tool is the best to make technical SEO perfect?

Thanks Stas.) Yoast plugin is a good tool as it gives you a list of suggestions that you should follow to improve your technical SEO. These 2 tools are also good: https://varvy.com/

https://sitechecker.pro Feel free to check them.

Great information. Very useful for beginner.